Guest Op-Ed: Sam Moseti

At the Africa Media Festival 2026, I gave an ‘Ignite talk’ arguing that AI transparency matters for media freedom. I built my case on five accretive points that together form one through line: AI opacity limits the capacity of media to interrogate AI power and systems, and increases media's vulnerability to delegitimisation. Let’s break this down.

1. The nexus of Transparency, Media Freedom and AI

Media freedom requires more than the absence of active censorship: journalists must also have the capacity and reasonable access to interrogate power in the worthy undertaking of keeping it in check. To exercise this right, media has always demanded transparency from governments and other institutions. Freedom of information legislation and open-data regimes provide the legal right to obtain documents and datasets, forming the infrastructure that allows journalists to investigate and verify claims. This is a cornerstone of a free press. Conversely, consistent scrutiny, exposure of corruption and policy explainers create ‘accountability pressure’ on governments and institutions to disclose information and justify decisions. I argue, therefore, that institutional transparency exists in a mutually reinforcing cycle with media freedom.

Today, AI is fast becoming both institutional and infrastructural power across systems and sectors. AI is no longer just a ‘tool’; it now shapes how institutions function, who can act, and what kinds of decisions are even possible. In practice, AI is increasingly ‘siting’ inside workflows, public administration, cloud platforms, and governance systems, and so it starts to structure authority. Within media itself, the conversation on how AI is reshaping journalism is so pervasive that there has hardly been a media conference since 2024 without it as a core theme. And so:

2. AI transparency in Media

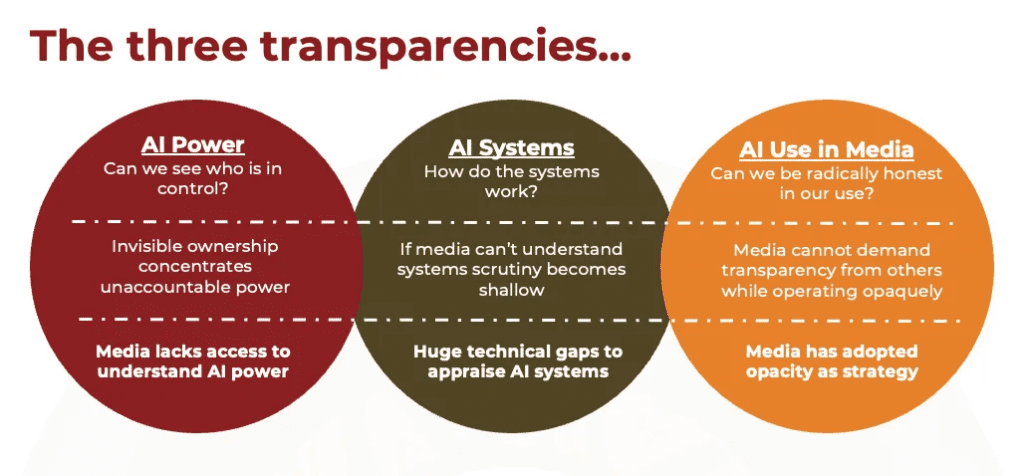

As this conversation is still nascent in the industry, there is no single definition of AI transparency in media. However, any definition you use is likely to fit into three buckets: Power, Systems and Use in media.

- For media to keep AI power in check, journalists must understand its ownership, funding, governance, and even the incentives that keep AI running. Invisible ownership concentrates unaccountable power. Media cannot hold any power to account if it cannot see who is in power.

- When it comes to AI systems, media must understand how AI works. We need to go beyond surface-level awareness to a deep comprehension of algorithms, generative logic, training data and processes, and moderation methodologies, among other systems. If media can’t understand AI systems, then any scrutiny is doomed to be shallow.

Media’s understanding of AI power and systems must encompass not only ‘who owns it’ and ‘how it works’ but also ‘who is affected, why and how’. This must happen both at a societal level and at the industry level. As media navigates the ‘AI revolution’, it is important to also question how AI is rapidly changing how media/news is received, consumed and appraised. Unfortunately, media, especially public interest media in the Global South, is often unable to fully examine AI power and systems due to access issues and technical gaps.

- Separately, it would be mighty pretentious to call out AI power and systems without media being radically honest about its own AI use. Media cannot demand transparency from others while operating opaquely itself. Media has greater control over this particular facet (isn’t it simply disclosing how you used AI for a particular piece?), yet it remains highly contested. Research shows that 81% of journalists across various regions are currently using generative AI tools in their professional tasks (1), yet very few disclose this use. Media seems to have adopted opacity as a strategy, mainly to avoid being seen as ‘lazy’ by audiences. And that’s how you end up with embarrassing published pieces containing some very obvious AI prompts (2). And then audiences find out…

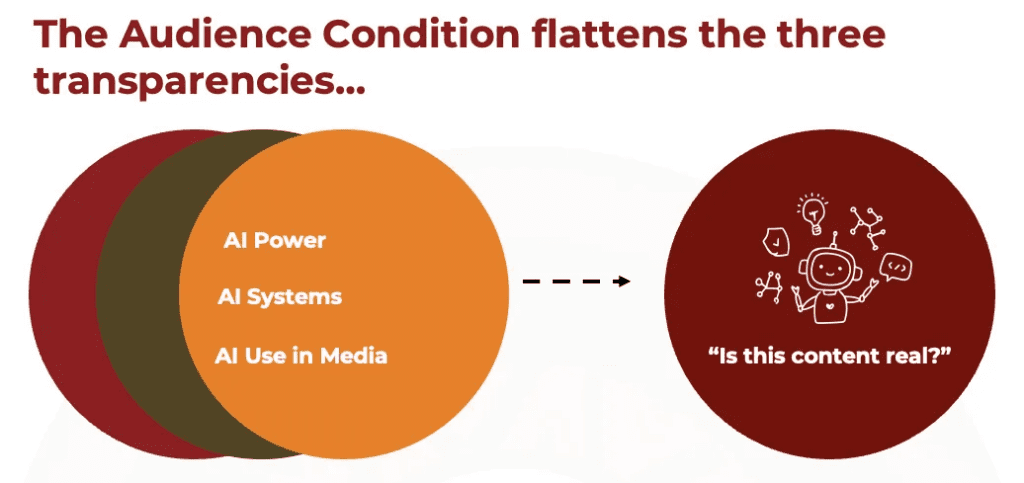

3. The Audience Condition

Media exists for audiences, and trust is a key theme for media freedom. Audiences must trust content from media for any accountability pressure on institutions to occur. Otherwise, when deemed ‘untrustworthy’, a media outlet or journalist becomes easy to delegitimise. Trust, therefore, is the central tension in why AI transparency matters for media freedom. So, how are audiences meeting this AI moment?

I argue that the audience condition flattens the three transparencies, mainly because audiences are not encountering AI power or systems per se; they are encountering outputs (from self-directed exchanges with AI) and content (externally delivered, sometimes by media). As they interact with these outputs and AI-generated content, audiences are increasingly realising the vast abilities of AI in generating images and text and fabricating voices and video. They understand that AI can do these things fast, and so it seems likely to them that media makers would use it in their work.

At the same time, audiences lack insight into the three AI transparencies (Point 2 above) which would have otherwise offered them proper anchors for evaluation.

In the current environment, where AI content has significantly saturated the information ecosystem, and without anchors for evaluation, suspicion has become the new normal. RNW Media’s Hype & Hesitation research with young audiences shows that young people aged 18–35 are deep skeptics of AI content and associate unlabelled or opaque AI use with misinformation, bias, and the erosion of authenticity. The big question they ask as they consume any content is “Is this real?” or, now more commonly, “Is this AI?”

While there are several theories of how audiences answer this question when left to their own devices, I think motivated reasoning and AI self-efficacy are the most potent.

- Motivated reasoning is the idea that people appraise AI-generated content through belief-driven judgement, i.e. people will judge content that aligns with their beliefs as true, and content that contradicts them as false.

- On the technical side, AI self-efficacy is one's belief in one's ability to spot AI content, whether or not one has interacted with AI (3). The more audiences interact with AI, the higher their self-efficacy; however, as AI gets better, it is becoming harder to tell what is AI and what isn’t.

As audiences use motivated reasoning and AI self-efficacy to assess content, it becomes easier for them to accept disinformation when it is comfortable, and to reject dislikable truth by labelling it “AI”. To illustrate this, here is a case study from Sudan on Hmedti’s death propaganda:

During the early days of the conflict, Sudanese Armed Forces (SAF) supporters campaigned that Hmedti (military head of the paramilitary RSF, the Rapid Support Forces) was dead, claiming that all evidence of his being alive was fake and AI-generated. This led the public to believe in his death, despite deepfake detection rejecting the claim. While there were many narratives (e.g., an alternative character, Hmedti’s twin), humanoid robots and AI-generated footage were among the most notable. The incident has shaped public perception of AI deepfakery, especially since notable politicians have confirmed it. In their investigation of the psychological impact of the Hmedti death disinformation, Beam Reports (2025) found that 33.1% of respondents (n=557) felt happy after hearing the news of Hmedti’s death. They also found that among those who had seen a video of him afterwards (n=378), 52.6% believed the video was real, while the remaining 47.4% either distrusted the video or were uncertain about it.

*As presented in Perceived and Actual AI Deepfakes: The Case of Sudan by Eilaf Salaheldeen Ahmed Mohamed. Published as part of the 2025 AMEL Sudan Democracy Lifeline Fellowship.(4)

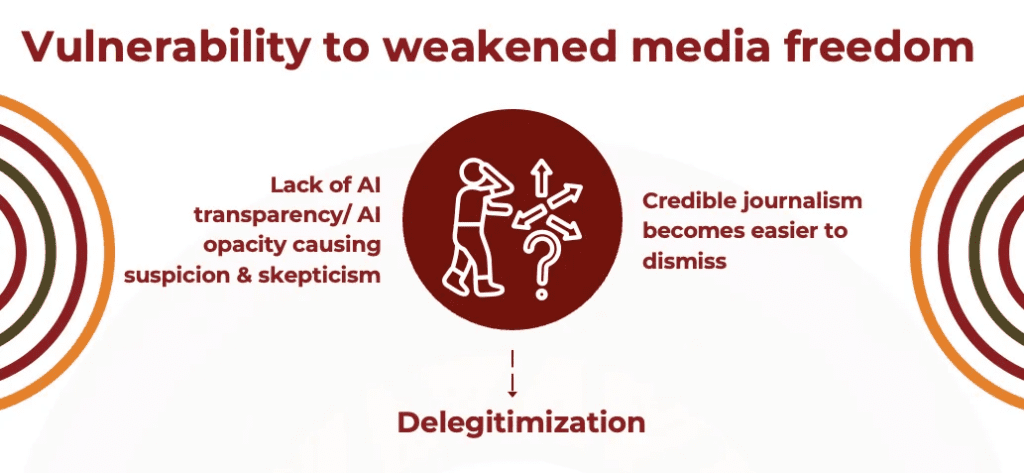

4. A deficiency in AI transparency

I call this deficiency of AI transparency ‘AI opacity’. It includes invisible ownership structures, hard-to-understand AI systems and failure to disclose AI use in media-making. AI opacity has some devastating effects on media freedom:

- The audience condition already shows that AI opacity denies audiences proper anchors for evaluation, i.e. instead of evaluating content through diverse, informed perspectives, audiences are forced to rely on their own motivated reasoning and AI self-efficacy. Hmedti’s case study shows that these individual-level factors can lead to wrong appraisals. Trust is then destabilised.

- Further, in an information ecosystem where skepticism and suspicion are the default position, consistent opacity and lack of nuance amplify doubt. Trust is once again destabilised. Where doubt prevails, credible journalism is easier to dismiss, verification loses its power and media authority weakens.

- With trust destabilised, it becomes easy for authoritarian governments and corrupt institutions to delegitimise media simply by exploiting the audience’s motivated reasoning and potentially manipulating their AI self-efficacy. Today, delegitimisation does not require banning journalists; it’s as easy as labelling an outlet’s content as ‘AI’.

5. What needs to happen?

My final argument is that for media to fulfil that worthy undertaking of keeping power in check today, it must respond to the AI moment by pursuing AI transparency fast. Media has the responsibility to stabilise evaluation and provide anchors for audiences in an AI-saturated environment in order to rebuild lost trust. Ultimately,

IF AI is fast becoming infrastructural and institutional power;

AND AI opacity destabilises trust/evaluation;

THEN media must interrogate AI power and systems and practice radical transparency about its own use.

*Here’s my own transparency: I used several generative AI tools to put together this presentation. As the through line was drawn from three different research studies, I used NotebookLM and ChatGPT to refine the logic and to polish language. Ideation for the visuals and abstract art in the presentation was done with Canva AI. This article, however, is completely human-written.

References

1. Thomson Reuters Foundation. TRF Insights: Journalism in the AI Era. https://www.trust.org/wp-content/uploads/2025/01/TRF-Insights-Journalism-in-the-AI-Era.pdf

2. Example of a published piece containing an AI prompt (Facebook post). https://www.facebook.com/whownskenya/posts/the-standard-newspaper-known-for-criticism-of-leaders-has-been-exposed-for-using/1438599497598120/

3. Liu, Q., Wang, L. & Luo, M. When seeing is not believing: self-efficacy and cynicism in the era of intelligent media. Humanit Soc Sci Commun 12, 274 (2025). https://doi.org/10.1057/s41599-025-04594-5

4. Mohamed, E. S. A. Perceived and Actual AI Deepfakes: The Case of Sudan. 2025 AMEL Sudan Democracy Lifeline Fellowship. https://democracyactionsd.org/en/perceived-and-actual-ai-deepfakes-the-case-of-sudan/